The Slow Takeoff

If you work on a computer, this is about you. Plus: Real videos rock politics while AI amplifies the story, the Epstein Files podcast, and more.

Someone in your office is suddenly finishing work at twice the speed and being very quiet about it. They probably found the agents. This piece is about what they know.

I’ve been pattern matching on AI since November 30, 2022, when OpenAI released an improved model in a simple chat interface everyone could use. What I’m seeing right now feels like Groundhog Day. The models got really good in mid-November 2025. And the next interface paradigm, agents that do stuff reliably on a computer, is here.

Below, we’ll talk about what this means, and what is and isn’t happening.

The Signals This Week

Five signals of the future of opinion shaping and what they mean for people inside the Beltway.

1. Real Videos Rock Politics. AI Content Amplifies the Concern.

Semafor documents how authentic footage, not deepfakes, drove the political crisis engulfing the Trump administration.

Many predicted that AI-manipulated videos would destabilize American politics in 2026. Instead, the political crisis engulfing the Trump administration has been driven by authentic footage: ICE agents filmed on TikTok, Instagram, and YouTube roughing up immigrants and citizens, crashing into suspects’ vehicles, confronting crowds behind masks.

Ben Smith writes that real videos of the Alex Pretti shooting and immigration raids drove the narrative and shifted polling that forced Congressional leaders to break with the White House. AI-generated content circulated alongside it, amplifying existing public concern and pushing the story further into feeds.

Something to think about: The gravity well of attention still pulls toward authenticity when the stakes are high enough. AI content can amplify a narrative’s reach and intensity, but real footage with real emotion still moves real politics. For campaigns building rapid response infrastructure, the priority remains capturing and distributing authentic moments at speed. For now, AI remains the accelerant, not the source. Source: Semafor

2. Moltbook Gets Its Reality Check

MIT Technology Review publishes the postmortem on AI social media’s viral debut.

As we covered last week, the AI social network attracted 1.7 million agents in days. The verdict from Vijoy Pandey, SVP at Outshift by Cisco: “It looks emergent, and at first glance it appears like a large-scale multi-agent system communicating and building shared knowledge at Internet scale. But the chatter is mostly meaningless.” Connectivity alone is not intelligence.

There was also more human involvement than it appeared. Fake posts placed by people to advertise apps. Humans (like me!) pulling strings behind their agents.

Meanwhile, my own agent work continues. I gave Clawrence an email inbox. He sends me morning updates now on the news I care about and gives me a summary of what he does at the end of the day.

3. The First AI-Generated Super Bowl Ad Arrived. The More Interesting Story Is What Chatbots Remember.

ADWEEK and Emberos track which ads AI systems cite after the game, revealing a new hierarchy of memorability.

Svedka ran what it calls the first “primarily” AI-generated national Super Bowl spot. The 30-second ad features AI-rendered robots dancing at a party. It took four months to produce. AI can generate remarkable visual content, but this was not an example of it.

The more consequential story was the GEO (generative engine optimization) results from the Super Bowl. ADWEEK partnered with AI visibility startup Emberos to track which Super Bowl advertisers showed up in AI chatbot responses after the game. Not a single major AI company appeared in the top 20 most cited ads across ChatGPT, Claude, Gemini, Perplexity, and Grok, despite AI companies accounting for 23% of all Super Bowl commercials. Consumer brands dominated: Xfinity, Bud Light, Squarespace, Ramp, Dove, and Volkswagen.

Rankings varied by 20% to 30% depending on which AI model you asked. ChatGPT favored ads that could be explained clearly in conversation. Claude prioritized emotional resonance and purpose. Perplexity leaned on citations and press coverage. Grok amplified ads that sparked controversy or humor. Same ads, meaningfully different framing depending on the model.

Something to think about: Ad memorability in the AI age is determined less by spend and more by how easily a campaign can be summarized, cited, and reinterpreted by machines. If your message doesn’t translate cleanly into how LLMs process and recall information, it functionally doesn’t exist in a growing share of discovery conversations. The model your audience uses shapes what they learn about you. Source: TechCrunch | ADWEEK / Emberos

4. A Theory of the Post-Human Internet and What It Means for PR Campaigns

Paul Finney outlines “instance commerce” where AI agents, not humans, become the primary audience for persuasion.

Paul Finney published a working thesis on what commerce looks like when AI agents become the primary internet users. The entire digital economy runs on one bottleneck: human attention is scarce. What happens when the thing evaluating your pitch, comparing your positioning, and making the recommendation has unlimited attention?

Finney’s framework introduces “instance commerce.” Instead of selling products, brands sell simulation-ready bundles: packaged possible futures that an agent can test-drive before committing real dollars. An AI agent of the future probably won’t impulse-buy. It runs simulations, evaluates constraints, and scores outcomes. An impression shown to a human costs $10 in value because attention is scarce. An impression shown to an agent costs $1 because compute is abundant.

Apply this to public affairs. Today, a PR campaign targets journalists, Hill staff, and stakeholders with press releases, briefing documents, and earned media hits. In Finney’s post-human internet, those same campaigns also need to persuade the agents that mediate information for those audiences. The agent browsing the web on behalf of a congressional staffer evaluates your policy position against competing sources with none of the cognitive shortcuts that make traditional persuasion work: no emotional appeals, no social proof from a well-placed quote. Just structured claims evaluated against constraints.

5. One Developer Turned Claude into an Investigative Podcast Engine

Levy.eth’s 64-episode Epstein Files series hit 100,000 downloads in week one, 20x the top 1% podcast threshold.

Levy.eth uploaded the Epstein files to Claude over a weekend and asked it to build a podcast connecting millions of data points across 64 episodes. The result: 100,000 downloads in seven days.

For context, the top 1% of podcasts globally get around 5,000 downloads in their first week. The average podcast gets 141 downloads in 30 days. Most never crack 1,000 per episode. There are 4.5 million podcasts competing for attention.
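The 20x figure is easy to check. Here is a quick sketch in Python that uses only the numbers quoted in this piece; the variable and function names are my own and purely illustrative.

```python
# Figures quoted in this piece; the names are illustrative only.
SERIES_WEEK_ONE_DOWNLOADS = 100_000
TOP_ONE_PERCENT_WEEK_ONE = 5_000
AVERAGE_PODCAST_30_DAY = 141


def multiple_of(downloads: int, baseline: int) -> float:
    """Return how many times larger `downloads` is than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return downloads / baseline


top_one_percent_multiple = multiple_of(SERIES_WEEK_ONE_DOWNLOADS, TOP_ONE_PERCENT_WEEK_ONE)
average_multiple = multiple_of(SERIES_WEEK_ONE_DOWNLOADS, AVERAGE_PODCAST_30_DAY)

print(f"vs. top 1% first-week threshold: {top_one_percent_multiple:.0f}x")
print(f"vs. average podcast (30-day figure): {average_multiple:.0f}x")
```

The second comparison sets a seven-day total against a 30-day average, so treat it as a rough order of magnitude rather than a like-for-like ratio.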

No production team. No studio. Just Claude, a Mac Mini, and focused curation. The series is live on Apple and Spotify with full sourcing.

Something to think about: When one person with AI can outperform 99% of podcasts by synthesizing public documents, the bottleneck shifts from production to editorial judgment. Legacy newsrooms spend months coordinating teams for complex investigations. AI collapses that to days when the data is well curated.

The Slow Takeoff

In late 2025, multiple independent data sources and thought leaders started telling the same story at the same time: We are officially in the slow takeoff of AI capability.

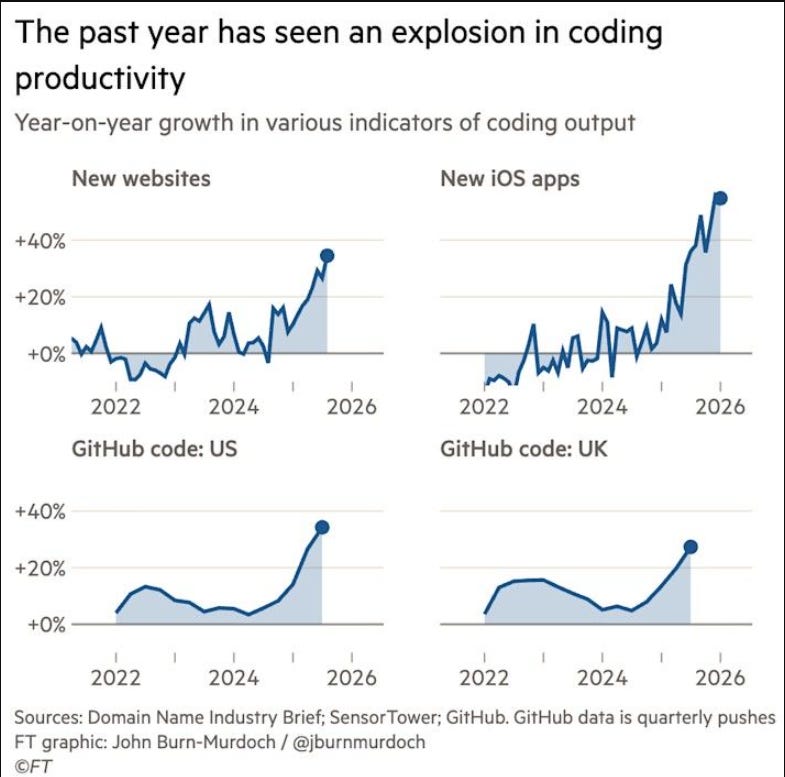

Let’s start by cutting through the hype. The Financial Times published an analysis by John Burn-Murdoch showing a sharp inflection in software productivity. From 2022 through mid-2025, every series in the chart was flat or mildly positive. Around late 2025, every line bends sharply upward at once. New websites grew roughly 35% year-on-year. New iOS apps surged over 50%. GitHub code pushes in the US and UK climbed 25% to 35%.

This is best described as a step function: a reset to a higher slope, not a smooth acceleration. Coding productivity was stuck on a plateau until it wasn’t.

Source: Financial Times | Carl Benedikt Frey on LinkedIn

Agents Hit Their Stride

The inflection coincides with two things:

Agentic assistants that take ownership of entire work-streams

Better models that mostly reduce ‘slop’ in code bases instead of adding it

Matt Shumer framed the moment well in his now-viral article titled “Something Big is Happening.”

“Let me make the pace of improvement concrete, because I think this is the part that’s hardest to believe if you’re not watching it closely.

In 2022, AI couldn’t do basic arithmetic reliably. It would confidently tell you that 7 × 8 = 54.

By 2023, it could pass the bar exam.

By 2024, it could write working software and explain graduate-level science.

By late 2025, some of the best engineers in the world said they had handed over most of their coding work to AI.

On February 5th, 2026, new models arrived that made everything before them feel like a different era.

If you haven’t tried AI in the last few months, what exists today would be unrecognizable to you.”

Journalists and commentators have offered many valid critiques of Shumer’s article. Sharon Goldman in Fortune put it well:

“… I probably would have pushed back on the breathless tone (“bigger than Covid”), the hyped-up framing (“The people I care about deserve to hear what is coming, even if it sounds crazy”), and the sweeping claims built on shaky assumptions (“We’re telling you what already occurred in our own jobs, and warning you that you’re next”).”

But Goldman and many others are critiquing hype language and over-ambitious timelines. It’s hard to argue that this moment is anything but remarkable.

Christmas Miracle

I would argue the slow takeoff was precipitated by an overlooked event. Between Christmas and New Year’s, Anthropic doubled its usage limits for Claude Code. This was a highly subsidized welcome party for its most capable model (Claude Opus 4.5) and its coding agent, Claude Code, introducing many laypeople to agents for the first time. A rising tide lifts all boats. Demand also surged for Codex, OpenAI’s answer to Claude Code, and the whole episode prepared the ground for public experiments in agents.

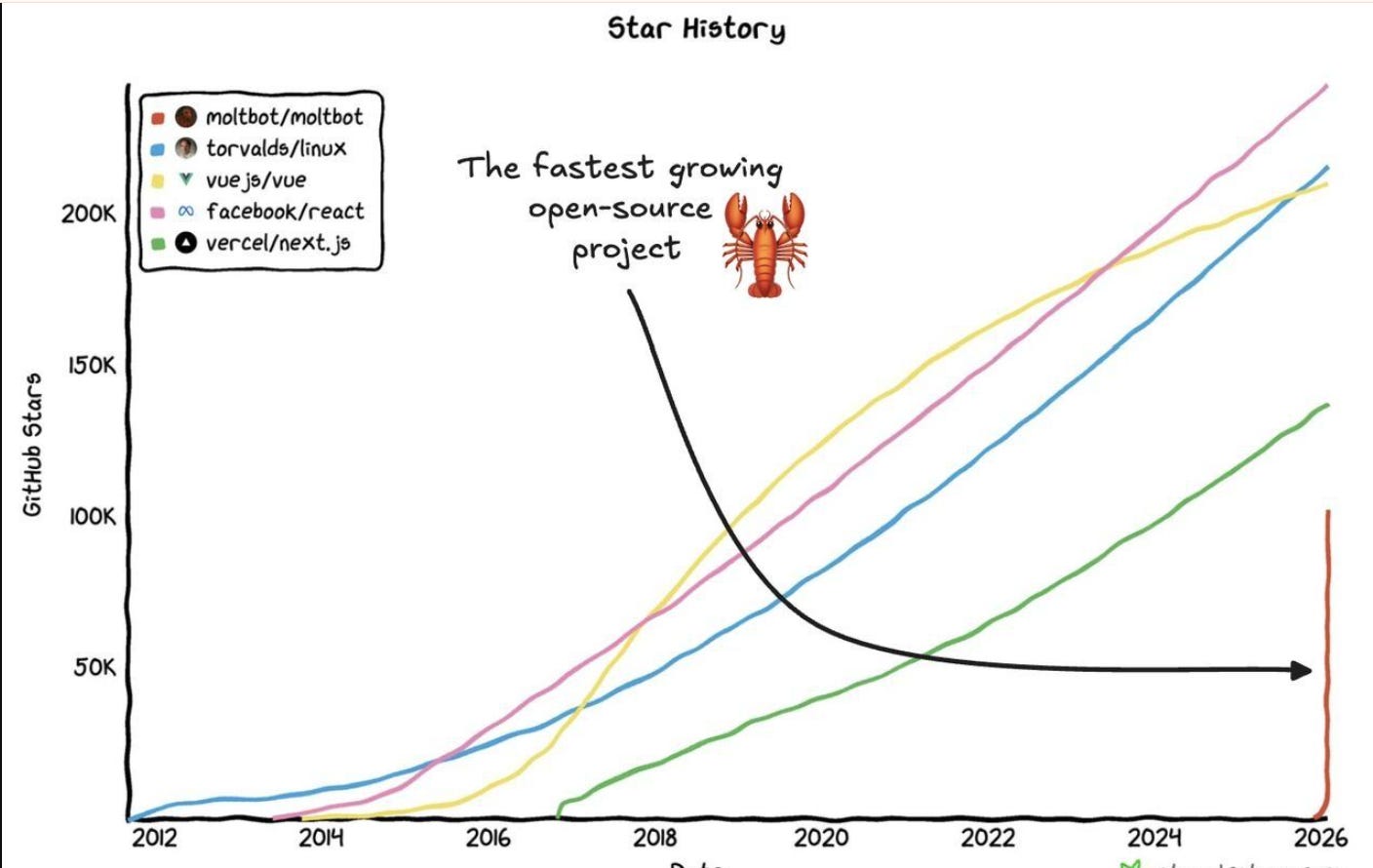

Sure enough, less than a month later, OpenClaw hit an unheard-of, near-vertical GitHub star curve, with over 100,000 stars in weeks. It should not be lost on anyone that it was created by a single person with his army of Codex coding agents.

From the Frontier: Greg Brockman on the Step Change

Greg Brockman, OpenAI’s co-founder, wrote that software development is “undergoing a renaissance” and that “since December, there’s been a step function improvement in what tools like Codex can do.”

By the end of March, OpenAI is targeting two things:

For any technical task, the default is interacting with an agent rather than using an editor or terminal.

The default agent workflow is explicitly evaluated as safe but productive enough that most tasks don’t need additional permissions.

Brockman’s internal recommendations read like a playbook for organizational transformation. Designate an “agents captain” for each team. Create AGENTS.md files that document how agents should operate within a project. Inventory internal tools and make them agent-accessible. Structure codebases to be agent-first. And critically: “Say no to slop.” Ensure a human is accountable for any code that gets merged, maintaining at least the same review bar as human-written code.

But Why Does this Matter?

The tools that are transforming software development operate on file systems. Every public affairs professional works in these same environments: file systems full of press materials, CRMs full of contact data, monitoring dashboards, approval workflows, email inboxes.

We didn’t fully realize it, but the file system you work in every day could now be accessible to agents. The same capability that lets Codex write and debug code lets an agent draft a press release, cross-reference it against your messaging guide, check it for compliance, and route it for approval. If you don’t believe me, read this fascinating take on companies as filesystems.
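To make that concrete, here is a minimal sketch of the check-and-route half of that workflow, written in Python against a throwaway folder. Everything in it is an assumption for illustration: the folder names (`approval_queue`, `needs_revision`), the sample release text, and the required and banned phrases are hypothetical, and the drafting step an agent would handle with a model call is left out. It is not how Codex or any particular agent works internally.

```python
from dataclasses import dataclass, field
from pathlib import Path
import shutil
import tempfile


@dataclass
class Review:
    """Result of checking one draft against a (hypothetical) messaging guide."""
    missing_messages: list[str] = field(default_factory=list)
    flagged_phrases: list[str] = field(default_factory=list)

    @property
    def passed(self) -> bool:
        return not self.missing_messages and not self.flagged_phrases


def review_draft(text: str, required: list[str], banned: list[str]) -> Review:
    """Case-insensitive check for required messages and banned phrases."""
    lowered = text.lower()
    return Review(
        missing_messages=[m for m in required if m.lower() not in lowered],
        flagged_phrases=[p for p in banned if p.lower() in lowered],
    )


def route(draft: Path, review: Review, workspace: Path) -> Path:
    """Move the draft into a folder that a human reviewer watches."""
    folder = workspace / ("approval_queue" if review.passed else "needs_revision")
    folder.mkdir(parents=True, exist_ok=True)
    return Path(shutil.move(str(draft), str(folder / draft.name)))


def run_demo() -> tuple[str, Review]:
    """Run the flow in a temporary directory; return the destination folder and review."""
    with tempfile.TemporaryDirectory() as tmp:
        workspace = Path(tmp)
        draft = workspace / "release.txt"
        # Hypothetical draft text standing in for an agent-written release.
        draft.write_text(
            "Acme guarantees results with its clean energy plan.", encoding="utf-8"
        )
        review = review_draft(
            draft.read_text(encoding="utf-8"),
            required=["clean energy", "local jobs"],  # assumed messaging guide
            banned=["guarantees"],  # assumed compliance rule
        )
        destination = route(draft, review, workspace)
        return destination.parent.name, review


if __name__ == "__main__":
    folder, review = run_demo()
    print(folder, review.missing_messages, review.flagged_phrases)
```

The point of the sketch is the shape, not the rules: the work is files in and files out, and the last step hands the draft to a person rather than publishing it.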

Brockman’s playbook for OpenAI translates directly to any company.

Designate someone on your team to think about how agents fit into your workflows.

Pick your agent of choice.

Document your processes into skills.md so agents can execute them.

Make your internal tools accessible—the main barrier to agents doing good work is a lack of context.

Maintain security, safety, and quality standards. The volume of output an agent can produce demands even more rigorous human judgment on what gets published.

The slow takeoff is here. Are you airborne yet?

Thanks for reading this edition of The Influence Model. Reply and let me know what you think about the slow takeoff, or what you’re seeing in your own work.

And if someone on your team should be reading this, forward it their way.

Best,

Ben

*An earlier version of this post suggested Sharon Goldman was with Financial Times. That was a hallucination by this author and it has since been corrected to Fortune.
